Analysis of Reasoning Trajectories - Comparing Closed Weight Models vs Open Weight Models - Claude Sonnet 4 vs Kimi K2 Instruct

Abstract

This study presents a comprehensive analysis of SWE-agent trajectories comparing Kimi K2 Instruct and Claude Sonnet 4 performance on software engineering tasks from the SWE-bench dataset. Through detailed examination of action category distributions, Sankey diagrams, Markov transition patterns, and step count distributions, our goal was to identify distinct behavioral patterns that reveal differences in problem-solving approaches between these two large language models when applied to automated software engineering. For the purpose of comparison, we ran SWE-agent with the Kimi K2 Instruct model and collected trajectories that we have submitted to the SWE-bench team for publishing. For comparison with Claude Sonnet 4 we used the previously published run from the SWE-agent team.

Introduction

The SWE-bench benchmark is a key framework for assessing the capabilities of large language models in real-world software engineering tasks. Understanding how different models approach these complex, multi-step problems provides valuable insights for both model development and practical deployment considerations.

Overall Performance Comparison

Before examining behavioral differences, it's important to establish the baseline performance metrics. With the SWE-agent scaffolding, Claude Sonnet 4 significantly outperformed Kimi K2 Instruct across the SWE-bench evaluation, achieving a 69.0% overall success rate compared to Kimi K2 Instruct's 53.4% - representing a 29% greater issue resolution capability.

This performance advantage is consistent across repository types, with Claude demonstrating superior or equivalent performance on virtually all evaluated repositories. Notable performance gaps included:

Sphinx: Claude 82% vs Kimi 34% (2.4x improvement)

Astropy: Claude 51% vs Kimi 27% (1.9x improvement)

Django: Claude 74% vs Kimi 57% (30% improvement)

SymPy: Claude 63% vs Kimi 48% (31% improvement)

Only on Pylint did Kimi show superior performance (41% vs Claude's 9%) - this is worth a future deep dive.

Our subsequent analysis focuses on the subset of issues that both models successfully resolved. This controlled comparison allowed us to examine the fundamental differences in problem-solving approaches when both models achieved the same outcome.

Methodology

The SWE-agent trajectories contain a thought-action-observation pattern, where "thought" represents the model's reasoning, "action" is the action that the model wants to take and "observation" is the output returned after taking the action. Each trajectory has several of these thought-action-observation steps that the model takes before an issue is resolved. In order to analyze the difference in model behavior we did the following:

Created Action Categories: First, we seeded an LLM prompt with action categories that we observed by manually inspecting a few trajectories and then provided an LLM with 100 trajectories, 50 each from Claud 4 Sonnet and Kimi K2 Instruct and asked the LLM to add / update / delete the seeded categories based on its analysis. For this purpose we used the Claude Sonnet 4 model API provided by Anthropic. Once we had a refined set of Action Categories we once again inspected the list and manually refined it to ensure it represented what we intended.

Classified Trajectory Steps: Next, based on the final Action Categories, we asked an LLM to categorize each step in 207 trajectory pairs, each pair containing the Claude Sonnet 4 and Kimi K2 Instruct trajectory for the same successfully resolved issue.

Analyzed Differences: After that, we analyzed the differences between the two models at an aggregate level, and then looked at pair-wise differences for the two models across the same issue. Here are the techniques we used:

- Action Category Distribution: percentage allocation of steps across different software engineering activities.

- Trajectory Length Summarizarion: illustrating the difference between the two models in terms of number of steps to resolve issues.

- Sankey Diagrams: showing a simple visualization of the "from" to "to" action aggregate level for the two models.

- Markov Transition Analysis: quantifying the "from" to "to" action aggregate level for the two models.

- Pairwise Trajectory Visualization: making it easy to compare the difference between the two models' approaches for an individual issue.

Results

Action Categories

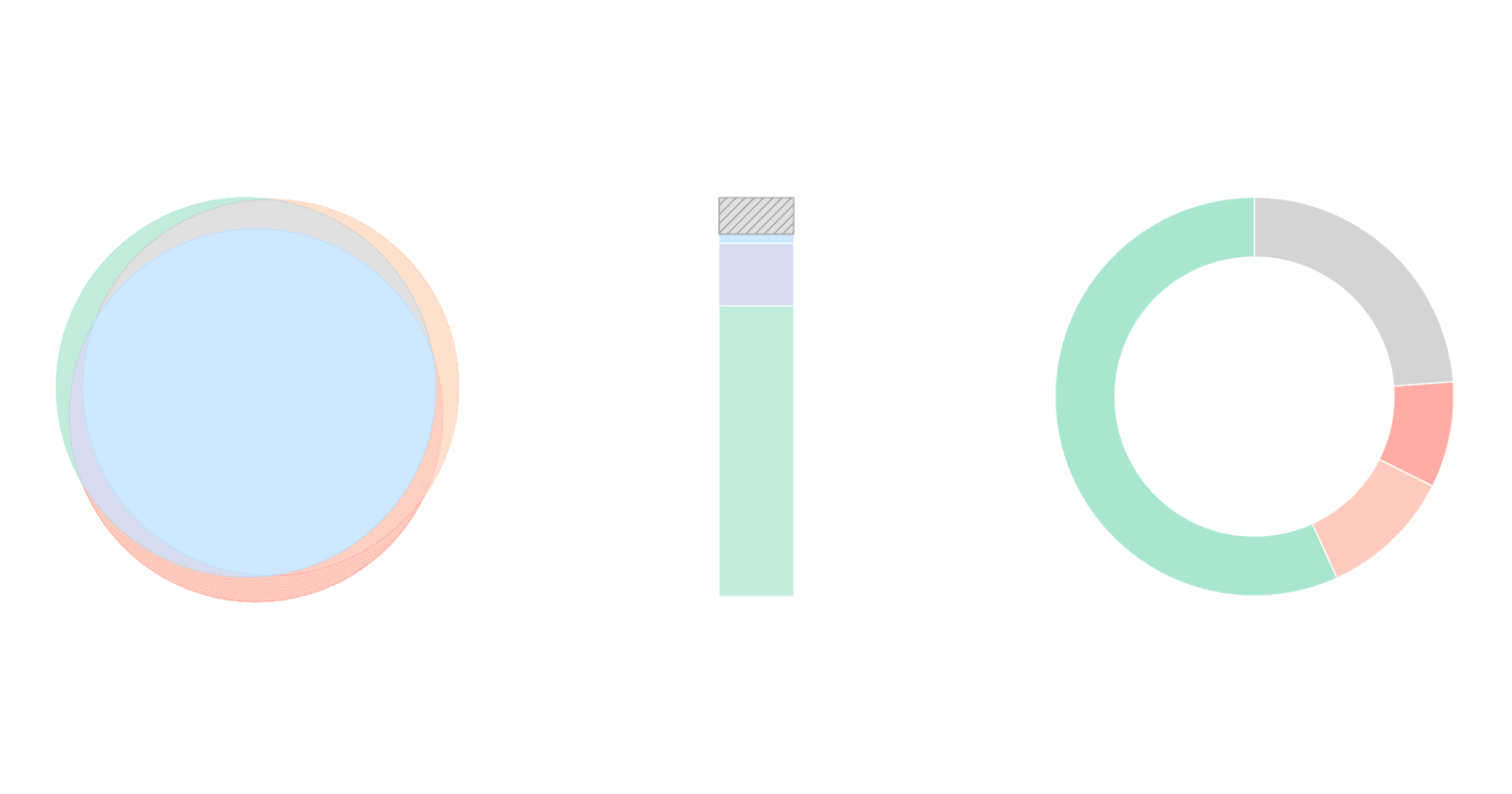

Here are the different action categories we used for the final analysis and how often the two models used them in their trajectories.

Claude Sonnet 4 does around 30% more Test Script Creation and 80% more Test Script Execution than Kimi K2 Instruct, which spends around 66% more of its time modifying code.

Trajectory Length

Below are the difference in trajectory length (number of steps) between the two models:

The step distribution analysis reveals significant differences in trajectory length requirements between the models.

Claude Sonnet 4 requires approximately 2.7x more steps to reach resolution, with a notably higher and a more consistent step count distribution. The box plot analysis above shows that Claude Sonnet 4's approach has lower variance in step requirements, suggesting a more consistent but lengthier methodology.

Kimi K2 Instruct showed a strong concentration of solutions in the 15-25 step range with some outliers going up to around 100 steps.

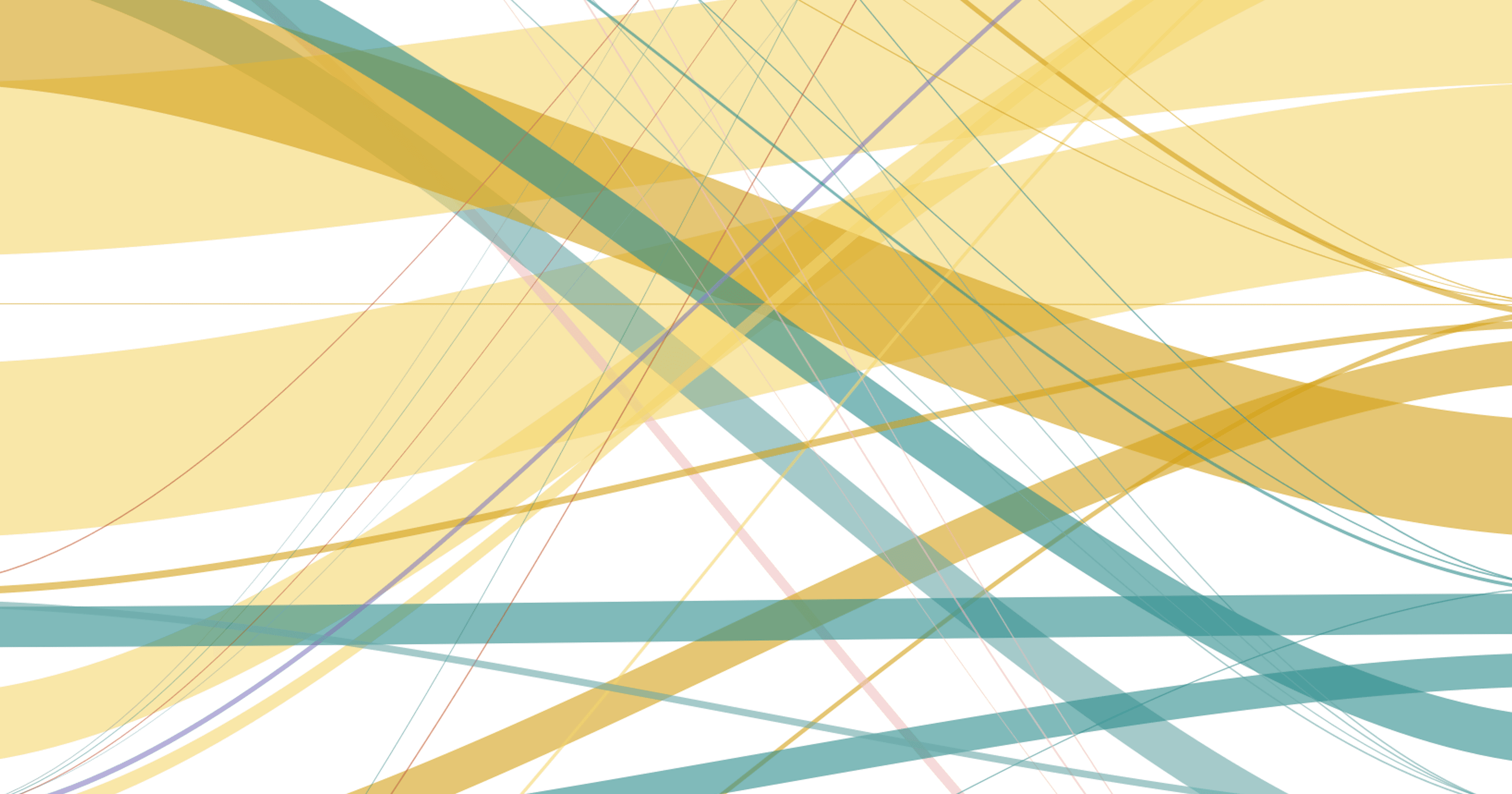

Sankey Workflow Diagram Comparison

The Sankey diagrams above provide visual confirmation of distinct workflow patterns employed by each model, revealing the complexity and routing patterns of their problem-solving approaches.

Claude Sonnet 4 exhibits significantly higher workflow complexity with 70 distinct transition types (≥5 occurrences) compared to Kimi K2 Instruct's 35 transitions. This 2:1 ratio indicates that Claude employs a more intricate approach to problem resolution, tries different paths, with greater interconnection between different activity categories.

Markov Transition Analysis

Building on the Sankey workflow visualizations, the transition probability matrices (filtered for categories with ≥1.5% involvement) show behavioral patterns characteristic of each model's problem-solving approach.

Claude Sonnet 4 transitions from Test Script Execution to Test Script Execution with 37% probability as opposed to Kimi K2 Instruct's 3%, indicating multiple iterations of running tests. Kimi K2 Instruct transitions from Test Script Execution directly to Code Modification 46% of the time against Claude Sonnet 4's 10%, indicating the reason for the shorter trajectories.

Visualizing Pairwise Trajectories for Individual Issues

|

|

|

|

|

|

|

|

To illustrate visually what the difference in steps means for the same issue on a head-to-head basis, the above figures color code the trajectory changes and plot them one below the other in pairs. The color coding for the different steps are available in the appendix. This further illustrates the difference in length of the trajectories as well as the emphasis that Claude Sonnet 4 has on Test Script Creation and Test Script Execution.

Limitations and Future Work

This analysis focused exclusively on successfully resolved issues, which may introduce bias. The behavioral patterns observed might not generalize to failed attempts or different problem domains within software engineering.

Potential topics for future research include:

- Analysis of failed trajectory patterns to understand model limitations

- Investigation of problem complexity correlation with step requirements

- Examination of solution quality metrics beyond binary resolution success

Conclusion

Our analysis revealed different approaches to automated software engineering between Kimi K2 Instruct and Claude Sonnet 4. While both models achieve successful resolution, with Claude Sonnet 4 leading substantially, they employ distinct strategies. Claude prioritizes iterative verification through testing, while Kimi emphasizes efficient exploration and direct problem-solving paths.

This analysis is based on SWE-agent trajectory data comparing Kimi K2 Instruct runs with previously collected Claude Sonnet 4 trajectories on mutually resolved SWE-bench issues.

Appendix

Action Category Labels and Color Codes